At Kingland, we use a variety of Artificial Intelligence (AI) techniques for text analysis as we build text analytics and enterprise data management solutions. One of the most sophisticated is the neural network. Inspired by (but certainly not the same as) human biology, neural networks can learn to answer questions about complex data by observing examples and storing the subtle patterns they find in the connections between nodes, which are loosely analogous to neurons in the human brain. Most neural networks have multiple layers, with information flowing from one layer to the next (and back again in some cases), often transforming the input into more and more abstract representations as it progresses through the layers.

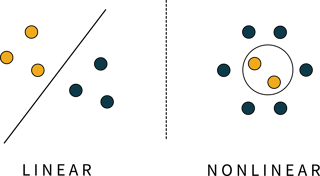

One of the most useful features of a neural network model is its ability, when correctly configured, to learn non-linear functions. The figure above illustrates the difference between linear and non-linear models.

Both sections of the figure show a decision problem where we would like to use a model to distinguish between the yellow and blue dots. The left-hand section shows a linear problem that is easily handled by a linear model (the diagonal black line). However, in the right-hand image, no linear function–no straight line–can separate the yellow dots from the blue. Instead, a non-linear function is needed, and the black circle represents just that.
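
The right-hand panel can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the toy data (yellow dots near the origin, blue dots in a ring) and the names `linear_model`, `circular_model`, and `best_linear_accuracy` are hypothetical and are not taken from the figure itself.

```python
import math

# Hypothetical toy data mirroring the right-hand panel: yellow dots cluster
# near the origin and blue dots form a ring around them.
ANGLES = [i * math.pi / 4 for i in range(8)]
DATA = [((0.5 * math.cos(a), 0.5 * math.sin(a)), "yellow") for a in ANGLES]
DATA += [((2.0 * math.cos(a), 2.0 * math.sin(a)), "blue") for a in ANGLES]


def linear_model(point, w, b):
    """Classify by which side of the straight line w.x + b = 0 the point is on."""
    x, y = point
    return "yellow" if w[0] * x + w[1] * y + b > 0 else "blue"


def circular_model(point, radius=1.0):
    """Classify by whether the point falls inside a circle around the origin."""
    x, y = point
    return "yellow" if x * x + y * y < radius * radius else "blue"


def accuracy(model):
    """Fraction of the toy points that the model labels correctly."""
    correct = sum(1 for point, label in DATA if model(point) == label)
    return correct / len(DATA)


def best_linear_accuracy():
    """Try many line directions and offsets and keep the best score found."""
    best = 0.0
    for k in range(72):
        angle = k * math.pi / 36
        w = (math.cos(angle), math.sin(angle))
        for step in range(-12, 13):
            b = step / 4
            best = max(best, accuracy(lambda p: linear_model(p, w, b)))
    return best


print("best straight line:", best_linear_accuracy())
print("circle:", accuracy(circular_model))
```

On this toy data the circle labels every point correctly, while no straight line in the search does, because the yellow dots sit entirely inside the ring of blue dots.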

Many problems we find in text analysis are non-linear in nature and thus less amenable to linear techniques. Nevertheless, a linear classification model called a support vector machine (SVM) is often a good baseline against which to compare non-linear models. Support vector machines use a clever trick to render non-linear problem sets "more" linear; intuitively, they pull the data points into higher-dimensional space. In the non-linear example above, if the yellow dots floated up off the screen, it would suddenly be easy to put a two-dimensional plane (a linear construct) between them and the blue dots. Support vector machines attempt to do this. We will not discuss them in further detail here, but it's useful to know that SVMs are often used as a measuring stick to see whether higher-order models are even useful for a particular problem.
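
The "floating up" intuition can be sketched directly. This is only an illustration: a real SVM performs the lift implicitly through a kernel function and learns the separating plane from data, whereas here the `lift` function, the plane, and the toy points are all hand-chosen assumptions.

```python
import math

# Hypothetical toy data: yellow dots near the origin, blue dots in a ring.
ANGLES = [i * math.pi / 4 for i in range(8)]
YELLOW = [(0.5 * math.cos(a), 0.5 * math.sin(a)) for a in ANGLES]
BLUE = [(2.0 * math.cos(a), 2.0 * math.sin(a)) for a in ANGLES]


def lift(point):
    """Map a 2-D point to 3-D; points near the origin get the greatest height."""
    x, y = point
    return (x, y, math.exp(-(x * x + y * y)))


def plane_side(point3d, w=(0.0, 0.0, 1.0), b=-0.5):
    """Linear rule in 3-D: positive above the flat plane z = 0.5, negative below."""
    return sum(wi * xi for wi, xi in zip(w, point3d)) + b


yellow_sides = [plane_side(lift(p)) for p in YELLOW]
blue_sides = [plane_side(lift(p)) for p in BLUE]

print("all yellow above the plane:", all(s > 0 for s in yellow_sides))
print("all blue below the plane:", all(s < 0 for s in blue_sides))
```

After the lift, a flat plane separates the two colors even though no straight line could do so in the original two dimensions.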

The list that follows is hardly exhaustive, but it illustrates the kinds of neural networks we have used for various tasks as we "teach software to read" unstructured or semi-structured data. These are broad classes of models, and there are unlimited ways to formulate a model within a particular class. We call this formulation the network topology because it defines the shape (the connectivity and plurality) of the nodes in the network. The choice of network topology is key to building a successful model, and there is no limit on how complex a topology can be. Usually, a model involves X layers containing Y nodes each, connected in whatever way is advantageous for the problem.

Fully-connected models are perhaps the simplest multi-layer neural networks. In these models, the "neurons," or nodes, in one layer are all connected to all of the nodes in the next layer. These networks are good at classification tasks, especially those without spatial or temporal dependencies among the inputs. Such a model would be a good choice for taking in statistics about a patient's symptoms and giving a Yes or No answer to the question of whether that patient has a particular illness.
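
A forward pass through a small fully-connected network might look like the sketch below. The symptom features, the layer sizes, and every weight are hypothetical values picked by hand for illustration; in practice the weights would be learned from training examples.

```python
import math


def dense(inputs, weights, biases, activation):
    """Fully-connected layer: every output node is wired to every input node."""
    return [
        activation(sum(w * x for w, x in zip(row, inputs)) + b)
        for row, b in zip(weights, biases)
    ]


def relu(v):
    return max(0.0, v)


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


# Hypothetical hand-set weights: 3 input features -> 2 hidden nodes -> 1 output.
HIDDEN_W = [[0.8, 0.2, -0.5], [-0.3, 0.9, 0.4]]
HIDDEN_B = [0.0, 0.1]
OUTPUT_W = [[1.2, -0.7]]
OUTPUT_B = [-0.2]


def predict(symptoms):
    """Return a score between 0 and 1 for 'patient has the illness'."""
    hidden = dense(symptoms, HIDDEN_W, HIDDEN_B, relu)
    return dense(hidden, OUTPUT_W, OUTPUT_B, sigmoid)[0]


# Hypothetical features, each scaled to 0..1: [fever, cough, fatigue]
score = predict([0.9, 0.4, 0.1])
print("Yes" if score >= 0.5 else "No", round(score, 3))
```

Each row of a weight matrix holds one node's connections to all nodes in the previous layer, which is what makes the layer fully connected.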

Convolutional models are not fully connected. Instead, connections from one layer to the next are made selectively, allowing small groups of nodes to become experts at identifying certain features. These experts are then slid across the entire input, and they pass on their findings to higher-level decision makers. Such models are very good at image classification because they can, for example, identify the shape of a cat no matter where in the input photograph the cat appears. By contrast, a fully-connected model may only learn to identify cats that are located and oriented approximately the same way as they appeared in the training images it saw.
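
The position independence described above can be sketched with a single small filter applied at every location of a tiny image. The 2x2 diagonal pattern, the 5x5 image size, and the hand-set filter are assumptions made for illustration; a trained network would learn its filters.

```python
def convolve2d(image, kernel):
    """Apply the kernel at every position (no padding, stride 1).

    Strictly speaking this is cross-correlation, which is what most
    deep learning libraries compute under the name "convolution".
    """
    kh, kw = len(kernel), len(kernel[0])
    out = []
    for r in range(len(image) - kh + 1):
        row = []
        for c in range(len(image[0]) - kw + 1):
            row.append(sum(image[r + i][c + j] * kernel[i][j]
                           for i in range(kh) for j in range(kw)))
        out.append(row)
    return out


def detects(image, kernel, threshold=2):
    """The feature is present if the filter responds strongly anywhere."""
    return max(max(row) for row in convolve2d(image, kernel)) >= threshold


def place(pattern, top, left, size=5):
    """Return a blank size x size image with the pattern pasted at (top, left)."""
    image = [[0] * size for _ in range(size)]
    for i, pattern_row in enumerate(pattern):
        for j, value in enumerate(pattern_row):
            image[top + i][left + j] = value
    return image


PATTERN = [[1, 0], [0, 1]]      # hypothetical feature: a short diagonal stroke
KERNEL = [[1, -1], [-1, 1]]     # hand-set filter that responds to that stroke

print(detects(place(PATTERN, 0, 0), KERNEL))   # True: stroke in the top-left corner
print(detects(place(PATTERN, 3, 3), KERNEL))   # True: stroke in the bottom-right corner
print(detects(place([[0, 0], [0, 0]], 0, 0), KERNEL))  # False: blank image
```

Because the same filter weights are reused at every position, the stroke is found wherever it appears in the image.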

We apply convolutional models to text by first transforming the individual words into a numeric form that represents the words' meanings, then treating those numeric inputs just like an image and looking for interesting features using convolutional networks.
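
As a rough sketch of this idea, the snippet below turns words into vectors and slides a two-word filter along the sentence. The two-dimensional `EMBEDDINGS` table and the hand-set `FILTER` are hypothetical; real word vectors are learned and have far more dimensions, and real filters are learned as well.

```python
# Hypothetical 2-dimensional word vectors, hand-set for illustration only.
EMBEDDINGS = {
    "not": (-1.0, 0.0),
    "good": (0.0, 1.0),
    "bad": (0.0, -1.0),
}
UNKNOWN = (0.0, 0.0)  # any word missing from the table


def embed(text):
    """Turn a sentence into a sequence of word vectors."""
    return [EMBEDDINGS.get(word, UNKNOWN) for word in text.lower().split()]


# Hand-set filter spanning two adjacent words; it responds most strongly
# to "not" followed by "good".
FILTER = [(-1.0, 0.0), (0.0, 1.0)]


def feature_map(vectors, filt):
    """Slide the filter along the sentence, one window of words at a time."""
    width = len(filt)
    return [
        sum(v * f
            for vec, fvec in zip(vectors[i:i + width], filt)
            for v, f in zip(vec, fvec))
        for i in range(len(vectors) - width + 1)
    ]


def max_pool(values):
    """Keep only the strongest response, wherever it occurred."""
    return max(values) if values else 0.0


for sentence in ("the movie was not good",
                 "not good at all said the critic",
                 "the movie was good"):
    print(sentence, "->", max_pool(feature_map(embed(sentence), FILTER)))
```

The first two sentences produce the same peak response even though the phrase sits at different positions, which is the text analogue of finding a shape anywhere in an image.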

Recurrent models don't only pass information from input to output; they have the ability to pass information backward as well. By doing so, they obtain the ability to remember information from previous inputs and apply it to subsequent inputs, which makes them excellent at learning long-term dependencies.

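An example of a long-term dependency is opening and closing parentheses. Consider a sentence containing a parenthetical that opens at the word "when" and has not yet closed by the time the word "correctly" appears.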

If the network sees only one word at a time, how would it learn where to find the closing parenthesis? By the time it sees the word "correctly," the fact that the word "when" had an opening parenthesis is lost to the past. Recurrent networks use feedback loops to store information about that parenthesis to inform downstream decisions.
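
The feedback loop can be sketched as a state value that is passed from one word to the next. This is a deliberately simplified stand-in: a real recurrent network learns its update rule as weights, whereas the `step` function here is a hand-written counter, and the example sentence is hypothetical.

```python
def step(hidden, token):
    """One recurrent update: the new state depends on the old state and the current word."""
    return hidden + token.count("(") - token.count(")")


def run(tokens):
    """Feed the words in one at a time, carrying the state forward."""
    hidden = 0
    states = []
    for token in tokens:
        hidden = step(hidden, token)
        states.append(hidden)
    return states


# Hypothetical sentence, split on whitespace so punctuation stays attached.
tokens = "networks (when configured correctly) can learn".split()
states = run(tokens)

for token, state in zip(tokens, states):
    print(f"{token:12s} open parentheses remembered: {state}")
# states == [0, 1, 1, 0, 0, 0]
```

At the word "configured" the input alone gives no hint of a parenthesis, but the carried state still records that one is open.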

We use models like this for unary classification, or text classification where the two classes are "is" and "isn't." Typically, we classify information into one or more categories, with training examples from each category. But if we really only want to know whether a piece of information came from Category A or didn't, it's likely we only have training examples for Category A.

So how do we come up with examples that aren't Category A when those examples could be absolutely any text of any kind? It turns out recurrent models can learn language models, which are statistical representations of the patterns present in language. We can train a language model to be familiar with–and only with–Category A language. Thus, when we have an unknown piece of text we wish to classify, we simply see how much the language model agrees with the unknown text. If the level of agreement is high, we can say the text is from Category A. If not, we can say the text is some other kind of language that isn't Category A.
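
The scoring idea can be sketched with a much simpler language model than a recurrent network. The snippet below swaps in a word-bigram count model with add-one smoothing purely for illustration; the training sentences, the `BigramModel` class, and the threshold of -2.0 are all hypothetical, and in practice the threshold would be tuned on held-out data.

```python
import math
from collections import Counter


class BigramModel:
    """Word-bigram language model with add-one smoothing (a stand-in for an RNN)."""

    def __init__(self, texts):
        self.pair_counts = Counter()
        self.context_counts = Counter()
        self.vocab = set()
        for text in texts:
            words = ["<s>"] + text.lower().split()
            self.vocab.update(words)
            for prev, cur in zip(words, words[1:]):
                self.pair_counts[(prev, cur)] += 1
                self.context_counts[prev] += 1

    def avg_log_prob(self, text):
        """Average log-probability per word: higher means more agreement."""
        words = ["<s>"] + text.lower().split()
        size = len(self.vocab) + 1  # one extra slot for unseen words
        total = 0.0
        for prev, cur in zip(words, words[1:]):
            p = (self.pair_counts[(prev, cur)] + 1) / (self.context_counts[prev] + size)
            total += math.log(p)
        return total / max(len(words) - 1, 1)


# Hypothetical Category A training text; no negative examples are needed.
CATEGORY_A = [
    "the fund acquired shares of the company",
    "the company issued shares to the fund",
    "the fund sold shares of the company",
]
model = BigramModel(CATEGORY_A)


def is_category_a(text, threshold=-2.0):
    """Say 'Category A' when the language model agrees strongly enough."""
    return model.avg_log_prob(text) >= threshold


print(is_category_a("the fund issued shares of the company"))
print(is_category_a("my cat sleeps on the warm sofa"))
```

Text that resembles the training language scores higher than unrelated text, so a single threshold on the score acts as the "is" or "isn't" decision.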

These are just a few ways we use neural networks to help clients discover what's important.
