What's your data worth? A few years ago I would hear this question come up fairly regularly in the financial services industry. Over the last several years, though, the topic of "valuing data" has been pretty quiet, as many executives have been more focused on the pressures that new regulations and evolving market risks have placed on their data programs.

Earlier this year the Harvard Business Review published an article titled Do You Know What Your Company's Data Is Worth? The authors introduce the concept of enterprise value of data (EvD) and raise a variety of points, predominantly about how you can value data as an intangible asset or think about the impact data has on the company. They even take it to the extreme of assessing the impact on the business if it didn't have access to its data. "To analyze EvD, determining the relative importance of data to an enterprise’s balance sheet, its ability to effectively compete, and its operational capabilities is a good place to start. This can be achieved not only by placing a dollar value on specific transactions, operations and divisions of a firm, but by imagining how that value may grow over time. In order to establish a basic EvD estimation, firms can work backwards from a total shutdown scenario, where systems and therefore the ability to use data are no longer functional."

Can you imagine that in a financial services setting? What would the impact be if...

Clearly, this "total shutdown scenario" is an interesting exercise in exploring the value of data. But why look at value? If I'm valuing a company, this may be an interesting financial exercise, but for today's Chief Data Officers I believe it is more than that. I suggest that value is one of the lenses through which to view the costs that go into a data program.

What makes the total shutdown scenario so useful is that it helps us identify which data is most important to a business (often referred to as "critical data"). In our example, if a business would be crippled without access to its client data, then client data is critical. If client data is critical, it is likely worth the investment to govern, manage, and maintain this data. But at what cost? In our view, data quality is the key to the cost-value equation.

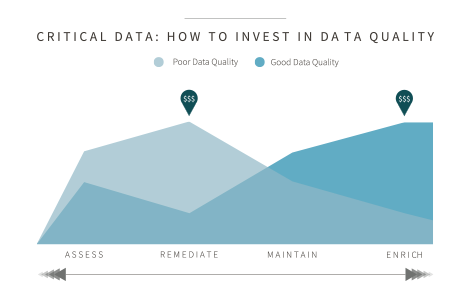

As we meet with executives, we regularly discuss the four categories of data quality management: assess, cleanse, maintain, and enrich. Those discussions suggest that most large enterprises spend at least 80% of their time assessing data quality and cleansing or remediating data errors or deficiencies to improve data quality. Is this the right amount? It might be, if the company is spending this time and money on the most critical data, the data that has the highest value.

This link between data quality, value, and cost is an important one. We suggest using the data quality framework and this notion of value as a "tool" for planning investments and ongoing operational expenses in data management. In the graphic shown, the starting question is simply an assessment of data quality. Depending on the overall quality of the data, there should be different investment postures.

For example, if data quality is low, the best investment of time and money is on the most critical data. Data quality must be assessed to ensure a complete understanding of quality issues. If there are issues and the data is critical (remember our total shutdown scenario), these are the data quality issues that must be remediated to repair the data asset. Additional investment should then also be placed in the maintenance of this data to ensure we don't have to complete the same remediation project again in the near future. Finally, any remaining budget can be spent on enrichment, or augmenting the critical data with other data attributes to make it more useful for the business.

If data quality is high, the investment changes slightly. Job one in a high-quality situation is to make sure maintenance is active and working well. This may require people or, in today's world, cognitive technologies, and other unique data sources can also be used. The counterbalance to this focus on maintenance is continued assessment. If there are data quality issues, some amount of investment can be spent on remediation. This scenario then allows more investment in enriching the data with unique data sets or cross-referencing, so the data can be used to generate even more value.
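For readers who like to see the logic written down, the two investment postures can be sketched in a few lines of Python. This is only an illustration of the ordering described above, not a prescribed method: the `DataSet` class, the `investment_order` and `plan` functions, the 0-to-1 quality score, and the 0.9 cut-off for "high" quality are all assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical cut-off: the article does not define what counts as "high" quality.
HIGH_QUALITY_THRESHOLD = 0.9


@dataclass
class DataSet:
    name: str
    critical: bool        # would the business be crippled without this data?
    quality_score: float  # assumed scale: 0.0 (poor) to 1.0 (excellent)


def investment_order(quality_score: float) -> list[str]:
    """Return the four data quality activities in priority order."""
    if quality_score >= HIGH_QUALITY_THRESHOLD:
        # High quality: protect it first, keep assessing, fix what turns up,
        # and leave more room for enrichment.
        return ["maintain", "assess", "cleanse", "enrich"]
    # Low quality: understand the issues, repair the asset, keep it repaired,
    # and enrich with whatever budget remains.
    return ["assess", "cleanse", "maintain", "enrich"]


def plan(data_sets: list[DataSet]) -> dict[str, list[str]]:
    """Build an investment posture for critical data sets only."""
    return {
        ds.name: investment_order(ds.quality_score)
        for ds in data_sets
        if ds.critical
    }


if __name__ == "__main__":
    portfolio = [
        DataSet("client", critical=True, quality_score=0.6),
        DataSet("pricing", critical=True, quality_score=0.95),
        DataSet("marketing_events", critical=False, quality_score=0.4),
    ]
    for name, order in plan(portfolio).items():
        print(name, "->", ", ".join(order))
```

Non-critical data sets are simply left out of the plan here, which reflects the point that time and money should go to the most critical data first. A real program would likely score quality per attribute and attach budgets to each activity.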

I've watched companies invest tens of millions of dollars in data programs over the last number of years and there has been tremendous progress across the industries we serve. As we look to the future, I believe the executives who prioritize the value of their major data sets and use advanced technologies to assess, remediate, enrich, and maintain can deliver more value to their organizations.
